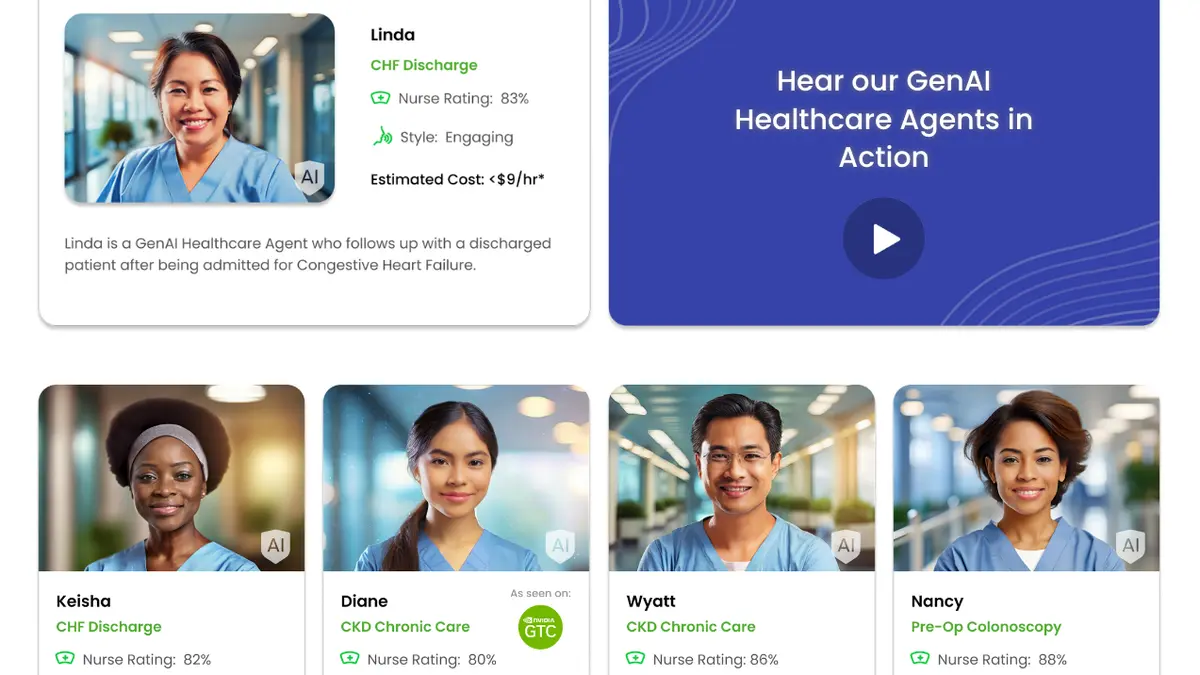

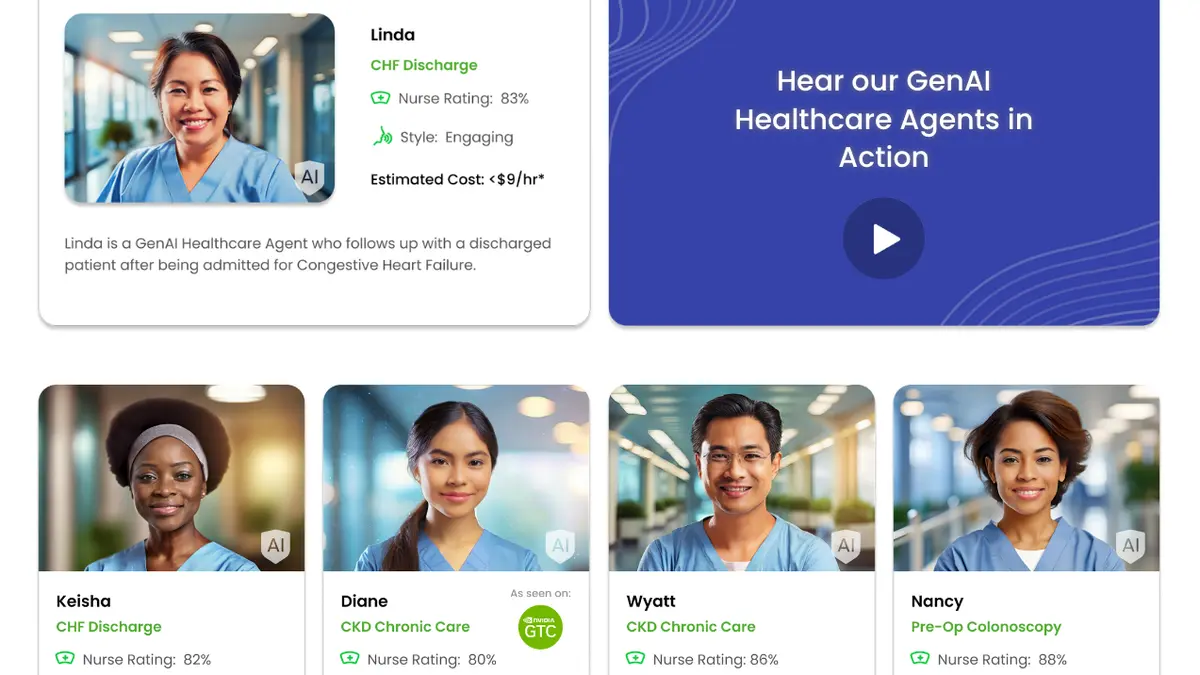

Nvidia Wants to Replace Nurses With AI for $9 an Hour

gizmodo.com
